Confluence vulnerability, a tale of catching active exploitation in the wild

Posted 07 Sep 2021

At SensorFleet we often run trials in co-operation with our customers and partners, testing new code in different virtualized environments and partner networks to validate fixes and try out new features. In this case we got interesting results, and one could say we were a bit lucky, or "just in time", as we programmers say.

In this blog post I summarize how a simple test with a SensorFleet Sensor in partner infrastructure led to detecting active exploitation of the Atlassian Confluence OGNL injection vulnerability (CVE-2021-26084).

The sprint meeting

One of the tasks we selected in the sprint meeting for the week of August 30th was pairing a Sensor with Microsoft Azure Sentinel to further validate and test sending relevant alert events to Sentinel. This also helps us home in on the best workflow for Sentinel by doing it ourselves, in other words by eating our own dog food.

Additionally, the sprint included a task to enable the community-id attribute in Suricata EVE log events by default.
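The community-id attribute is a hash of a flow's addresses, ports and protocol, defined by the public Community ID specification, so the same flow gets the same ID in every tool that implements it. Suricata computes it itself once `community-id` is enabled in the EVE output section of suricata.yaml; the Python below is only a minimal sketch of version 1 of the hash as we read the spec, limited to TCP and UDP (ICMP needs extra handling that is omitted). The addresses used with it are documentation ranges, not real hosts.

```python
import base64
import hashlib
import ipaddress
import struct

# IANA protocol numbers for the two transports this sketch handles.
PROTO_TCP = 6
PROTO_UDP = 17


def community_id_v1(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                    proto: int = PROTO_TCP, seed: int = 0) -> str:
    """Sketch of a Community ID v1 computation for a TCP or UDP flow.

    Based on our reading of the public spec; not Suricata's own code.
    """
    if proto not in (PROTO_TCP, PROTO_UDP):
        raise ValueError("this sketch only handles TCP and UDP")
    src = ipaddress.ip_address(src_ip).packed
    dst = ipaddress.ip_address(dst_ip).packed
    # Order the endpoints so both directions of a flow hash identically:
    # the smaller address comes first, and ports break ties.
    if (src, src_port) > (dst, dst_port):
        src, dst = dst, src
        src_port, dst_port = dst_port, src_port
    data = (struct.pack("!H", seed) + src + dst
            + struct.pack("!BB", proto, 0)      # protocol + one padding byte
            + struct.pack("!HH", src_port, dst_port))
    digest = hashlib.sha1(data).digest()
    return "1:" + base64.b64encode(digest).decode("ascii")
```

Because the endpoints are ordered before hashing, both directions of a flow produce the same ID, which is what makes copy-pasting it from an alert into another tool work.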

Setting up

As my first tasks of the week, I selected a test platform for the new features and deployed updated package versions to a test Sensor running in an ISP environment. This Sensor sits at the network edge and runs the Suricata IDS Instrument with the Emerging Threats Open ruleset, which has been tuned for this deployment. The Instrument was also configured to send Suricata alert events to Sentinel with just a few lines of configuration, as shown in our previous blog post.
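This post does not say which ingestion path the Instrument uses. One documented option in 2021 was the Azure Monitor HTTP Data Collector API (since superseded by the Logs Ingestion API), which authenticates each POST with an HMAC-SHA256 "SharedKey" signature. The sketch below assumes that API; it keeps only alert events from EVE JSON lines and builds the request without sending it. The `SuricataAlert` log type is a hypothetical table name, and this is not SensorFleet's implementation.

```python
import base64
import datetime
import hashlib
import hmac
import json


def alert_events(eve_lines):
    """Yield only the alert events from Suricata EVE JSON lines."""
    for line in eve_lines:
        event = json.loads(line)
        if event.get("event_type") == "alert":
            yield event


def build_data_collector_request(workspace_id: str, shared_key: str,
                                 events: list, log_type: str = "SuricataAlert"):
    """Return (url, headers, body) for a Data Collector API POST (not sent).

    Assumes the HTTP Data Collector API; `log_type` is a hypothetical name.
    """
    body = json.dumps(events).encode("utf-8")
    # RFC 1123 date, which must match the x-ms-date header exactly.
    date = datetime.datetime.now(datetime.timezone.utc).strftime(
        "%a, %d %b %Y %H:%M:%S GMT")
    string_to_sign = (f"POST\n{len(body)}\napplication/json\n"
                      f"x-ms-date:{date}\n/api/logs")
    signature = base64.b64encode(
        hmac.new(base64.b64decode(shared_key), string_to_sign.encode("utf-8"),
                 digestmod=hashlib.sha256).digest()
    ).decode("ascii")
    url = (f"https://{workspace_id}.ods.opinsights.azure.com"
           "/api/logs?api-version=2016-04-01")
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"SharedKey {workspace_id}:{signature}",
        "Log-Type": log_type,  # the service stores this as a <log_type>_CL table
        "x-ms-date": date,
    }
    return url, headers, body
```

The caller would POST `body` to `url` with `headers` using any HTTP client; keeping the signing separate from the network call makes it easy to test.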

Sentinel provides a query language called Kusto Query Language (KQL), which lets the user build very flexible and rich queries across datasets. Personally I do not have much experience with it, but the basics are easy to pick up. After a while I had constructed queries that returned high-priority alerts. The actual complex logic is abstracted behind a custom function, but for the "end user" the query is fairly simple:

    let SeverityLevels = dynamic([1, 2]);
    let Directions = dynamic(["in", "out"]);
    GetAlertInfo(IgnoredCategories, SeverityLevels, Directions)
    | sort by timestamp
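The body of `GetAlertInfo` is not shown here, so the Python below is only a hypothetical analogue of what its parameters suggest: keep alerts whose Suricata severity is in the list (1 is the highest priority in Suricata), whose category is not ignored, and whose direction is allowed, then sort by timestamp newest first as KQL's `sort by` does by default. The per-event `direction` label is an assumption; the EVE fields `alert.severity`, `alert.category` and `timestamp` are standard.

```python
from datetime import datetime


def get_alert_info(events, ignored_categories, severity_levels, directions):
    """Hypothetical Python analogue of the custom GetAlertInfo() KQL function.

    Assumes each event carries a precomputed "direction" of "in" or "out".
    """
    selected = [
        e for e in events
        if e["alert"]["severity"] in severity_levels
        and e["alert"]["category"] not in ignored_categories
        and e.get("direction") in directions
    ]
    # KQL's `sort by` is descending by default, so newest alerts come first.
    # Suricata timestamps look like 2021-08-31T20:31:05.123456+0300.
    return sorted(
        selected,
        key=lambda e: datetime.strptime(e["timestamp"], "%Y-%m-%dT%H:%M:%S.%f%z"),
        reverse=True,
    )
```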

Because the Sensor sits at the provider edge and many of the protected networks are directly exposed to the Internet, the initial ruleset was far too noisy to raise alerts on. Inbound attempts are something we want to log, but we don't want them as actionable events. As a compromise, I decided to test a query where only alerts involving an outgoing connection are treated as actionable. If the query looked good, I would create a Sentinel rule to automatically raise incidents based on it, but first I wanted to validate that it produced good data, which in the normal case means no results.
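The "outgoing only" idea can be sketched by classifying each alert against the list of protected networks. The prefixes below are hypothetical documentation ranges, and treating the alert's `src_ip` as the connection initiator is a simplification: Suricata reports the addresses of the packet that matched, which is not always the side that opened the connection.

```python
import ipaddress

# Hypothetical protected prefixes (documentation ranges, not real networks).
PROTECTED = [ipaddress.ip_network("192.0.2.0/24"),
             ipaddress.ip_network("2001:db8::/32")]


def is_protected(ip: str) -> bool:
    addr = ipaddress.ip_address(ip)
    # Membership tests across IPv4/IPv6 simply return False.
    return any(addr in net for net in PROTECTED)


def direction(src_ip: str, dst_ip: str) -> str:
    """Classify traffic relative to the protected networks."""
    src_in, dst_in = is_protected(src_ip), is_protected(dst_ip)
    if src_in and not dst_in:
        return "out"
    if dst_in and not src_in:
        return "in"
    return "internal" if src_in else "external"


def is_actionable(alert: dict) -> bool:
    """Only alerts where a protected host talks outward are actionable."""
    return direction(alert["src_ip"], alert["dest_ip"]) == "out"
```

With this split, the constant stream of inbound scanning and exploit attempts stays logged but never pages anyone, while a protected host reaching out to something an IDS rule dislikes does.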

The evening after the set-up

Sometimes you get that nagging “hmm I wonder if it works” feeling and want to check the results after the usual work hours. And sometimes it pans out like in this case…

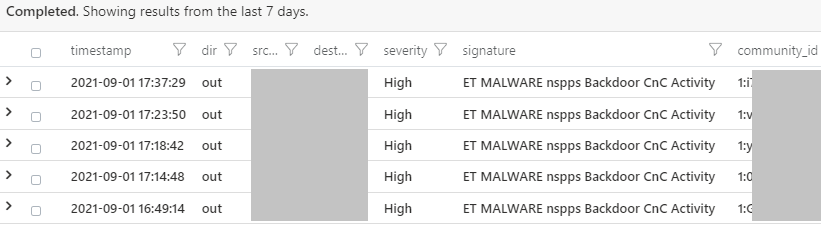

At about 20:30 that evening I ran the Sentinel query again, which had returned no results earlier in the day, and saw something strange:

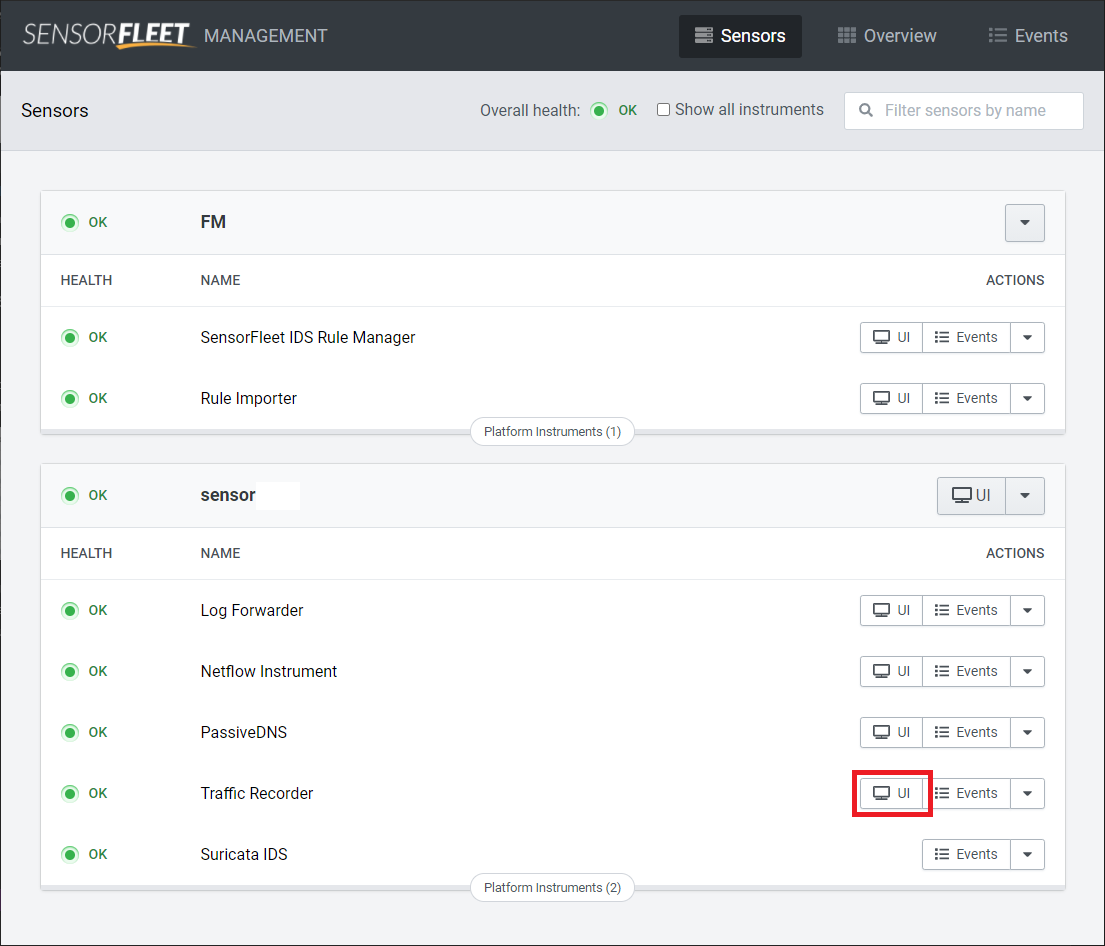

Alright, so we have a match. I looked up the reference in the Suricata rule and found a malware analysis by IronNet, so now I understood what kind of detection I was dealing with. I then thought this would be an excellent time to test the newly enabled community-id feature as well, so I opened SensorFleet Fleet Management and selected the Traffic Recorder Instrument UI from there.

SensorFleet uses Arkime for traffic recording. The Sensor in question runs in a filtering mode where not all traffic is recorded; only traffic related to IDS hits is.

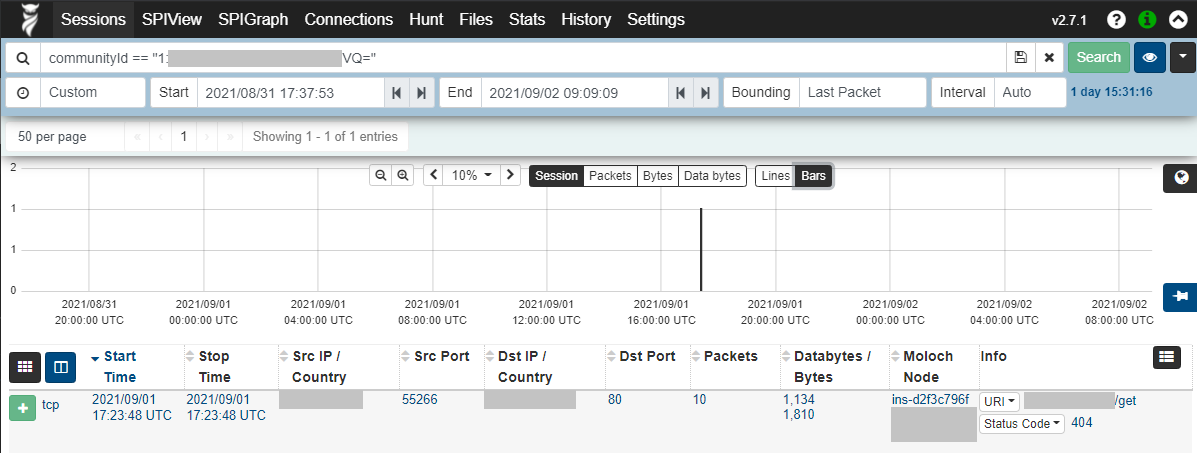

The Sensor maintains a 10-second in-memory ring buffer in the recorder to ensure that the traffic that triggers an IDS alert is also recorded. Thanks to the community-id included in the alert, I could look up the single flow that created the alert in the Arkime UI just by copy-pasting the community-id into the Arkime search box.
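The filtering mode and the 10-second ring buffer can be illustrated with a small model: packets are held in memory for the window, and when an alert names a flow by its community-id, that flow's buffered packets are saved and its later packets are recorded directly. This is a hypothetical sketch of the idea, not the Traffic Recorder's code; it assumes packets arrive in timestamp order and uses a plain list as a stand-in for on-disk storage.

```python
from collections import deque


class FilteringRecorder:
    """Hypothetical model of a recorder that keeps a time-based ring buffer
    and stores only flows that an IDS alert has flagged."""

    def __init__(self, window: float = 10.0):
        self.window = window
        self.buffer = deque()        # (ts, community_id, payload), oldest first
        self.recorded_flows = set()  # community-ids flagged by alerts
        self.stored = []             # stand-in for on-disk PCAP storage

    def on_packet(self, ts: float, community_id: str, payload: bytes) -> None:
        if community_id in self.recorded_flows:
            self.stored.append((ts, community_id, payload))
            return
        self.buffer.append((ts, community_id, payload))
        # Evict packets older than the window relative to the newest packet.
        while self.buffer and self.buffer[0][0] < ts - self.window:
            self.buffer.popleft()

    def on_alert(self, community_id: str) -> None:
        """Persist the alerting flow's buffered packets and keep recording it."""
        self.recorded_flows.add(community_id)
        self.stored.extend(p for p in self.buffer if p[1] == community_id)
        self.buffer = deque(p for p in self.buffer if p[1] != community_id)
```

The buffer is what lets the packets that actually triggered the alert end up on disk, even though the alert arrives only after they have passed.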

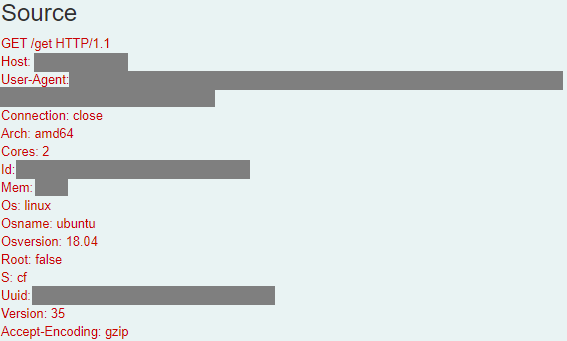

Expanding the flow reveals headers very similar to the ones described in IronNet's malware analysis:

At this point I could say with very high confidence that this was a true positive. I contacted the party responsible for the server, and we did a quick triage and analysis in which system logs and malware artifacts were collected. The virtual machine was also restored to a recent snapshot taken before the compromise.

We also did some post-incident analysis using records from the Netflow Instrument, where we could find the initial point of compromise and the subsequent malware payload fetches. This gave us a set of IoC IP addresses, which we shared with our close community, and we immediately found that another Confluence instance had been compromised from the same IoC IP addresses. The two Confluence instances were compromised within a few seconds of each other, which led us to believe this might be part of a larger bot campaign. We therefore made a lot of noise among our peers to make sure their instances were patched, or at least temporarily firewalled, to prevent any personal (or corporate) data from leaking. We also shared our IoCs, logs, etc. with CERT-FI.
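The Netflow part of this analysis boils down to two questions: when did each host first talk to an IoC address, and were several hosts hit at nearly the same time? The sketch below answers both over flow records with hypothetical keys (`start` in epoch seconds, `src_ip`, `dst_ip`); the 10-second gap used to flag a likely automated campaign is an illustrative choice, not a value from our investigation.

```python
def first_contact_with_iocs(flows, ioc_ips):
    """Map each non-IoC host to (earliest start time, IoC address) of its
    first flow to or from any IoC address. Record keys are hypothetical."""
    first_seen = {}
    for f in flows:
        if f["src_ip"] in ioc_ips:
            host, ioc = f["dst_ip"], f["src_ip"]
        elif f["dst_ip"] in ioc_ips:
            host, ioc = f["src_ip"], f["dst_ip"]
        else:
            continue
        if host not in first_seen or f["start"] < first_seen[host][0]:
            first_seen[host] = (f["start"], ioc)
    return first_seen


def compromised_close_together(first_seen, max_gap=10.0):
    """Return hosts whose first IoC contact is within max_gap seconds of
    another host's, a hint of an automated (bot) campaign."""
    times = sorted((t, host) for host, (t, _ioc) in first_seen.items())
    flagged = set()
    for (t1, h1), (t2, h2) in zip(times, times[1:]):
        if t2 - t1 <= max_gap:
            flagged.update({h1, h2})
    return flagged
```

The earliest entry per host approximates the initial point of compromise, and later flows from that host to other IoC addresses correspond to follow-up activity such as payload fetches.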

Lessons learned

Sometimes the simplest of tests and trials of new tech can yield fast and visible results. In this case we were able to detect and mitigate active exploitation of CVE-2021-26084 through a "secondary" detection of C2 traffic.

In the end, it seems we caught the successful exploitation of that Confluence instance within an hour or so, purely through this SensorFleet Sensor-to-Sentinel test setup, and it is always great to be able to do cool stuff with the tools we develop ourselves! The query is now used as an automated task that creates incidents whenever it sees any hits. The setup also turned out to be a good example of how different Instruments, in this case Suricata IDS, Traffic Recorder and Netflow, augment the detection data provided by a single Instrument and aid in forensics.